SPEC CPU®2026 Overview / What's New?

This document introduces the SPEC CPU®2026 Benchmark Suite via a series of questions and answers. The SPEC CPU 2026 Benchmark Suite is a product of the SPEC® non-profit corporation (about SPEC).

Benchmarks: good, bad, difficult, and standard

Q1. What is SPEC?

SPEC is the Standard Performance Evaluation Corporation, a non-profit organization

founded in 1988 to establish standardized performance benchmarks that are objective, meaningful, clearly defined, and

readily available. SPEC members include hardware and software vendors, universities, and researchers. [About SPEC]

SPEC was founded on the realization that "An ounce of honest data is worth a pound of marketing hype".

Q2. What is a (good) benchmark?

A surveyor's bench mark (two words) defines a known reference point by which other locations

may be measured.

A computer benchmark performs a known set of operations by which computer performance can be

measured.

| Table 1: Characteristics of useful performance benchmarks | |
|---|---|
| Specifies a workload | A strictly-defined set of operations to be performed. |
| Produces at least one metric | A numeric representation of performance. Common metrics include: Time (for example, seconds to complete the workload); Throughput (work completed per unit of time, for example, jobs per hour). |
| Is reproducible | If repeated, will report similar (*) metrics. |
| Is portable | Can be run on a variety of interesting systems. |
| Is comparable | If the metric is reported for multiple systems, the values are meaningful and useful. |
| Checks for correct operation | Verify that meaningful output is generated and that the work is actually done. "I can make it run as fast as you like if you remove the constraint of getting correct answers." (**) |
| Has run rules | A clear definition of required and forbidden hardware, software, optimization, tuning, and procedures. |

(*) "Similar" performance will depend on context. The benchmark should include guidelines as to what variation one should expect if the benchmark is run multiple times.

(**) Author unknown. If you know who said it first, write.

Q3. What are some common benchmarking mistakes?

Creating high-quality benchmarks takes time and effort. There are some difficulties that need to be avoided.

The difficulties listed in the table are based on real examples, and the solutions are what SPEC CPU tries to

do about them.

| If the benchmark description says: | There may be potential difficulties: | Solutions |
|---|---|---|
| 1. It runs Loop 1 billion times. | Compiler X runs 1 billion times faster than Compiler Y, because compilers are allowed to skip work that has no effect on program outputs ("dead code elimination"). | Benchmarks should print something. |
| 2. Answers are printed, but not checked, because Minor Floating Point Differences are expected. | What if Minor Floating Point Difference sends it down Utterly Different Program Path? If the program hits an error condition, it might "finish" twice as fast because half the work was never attempted. | Answers should be validated, within some sort of tolerance. |
| 3. The benchmark is already compiled. Just download and run. | You may want to compare new hardware, new operating systems, new compilers. | Source code benchmarks allow a broader range of systems to be tested. |
| 4. The benchmark is portable. Just use compiler X and operating system Y. | You may want to compare other compilers and other operating systems. | Test across multiple compilers and OS versions prior to release. |
| 5. The benchmark measures X. | Has this been checked? If not, measurements may be dominated by benchmark setup time, rather than the intended operations. | Analyze profile data prior to release, verify what it measures. |
| 6. The benchmark is a slightly modified version of Well Known Benchmark. | Is there an exact writeup of the modifications? Did the modifications break comparability? | Someone should check. Create a process to do so. |
| 7. The benchmark does not have a Run Rules document, because it is obvious how to run it correctly. | Although "obvious" now, questions may come up. A change that seems innocent to one person may surprise another. | Explicit rules improve the likelihood that results can be meaningfully compared. |
| 8. The benchmark is a collection of low-level operations representing X. | How do you know that it is representative? | Prefer benchmarks that are derived from real applications. |
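The first two rows of the table (print something, then validate it within a tolerance) can be illustrated with a minimal sketch. The function name `validate_output`, the tolerance values, and the sample numbers are hypothetical and chosen only for illustration; this is not how the SPEC CPU tools implement validation.

```python
import math

def validate_output(actual, expected, rel_tol=1e-6, abs_tol=1e-9):
    """Return True if every value matches its expected value within tolerance.

    A missing or extra value fails validation, so a run that stopped early
    (and therefore "finished" faster) is not accepted as a valid result.
    """
    if len(actual) != len(expected):
        return False
    return all(
        math.isclose(a, e, rel_tol=rel_tol, abs_tol=abs_tol)
        for a, e in zip(actual, expected)
    )

# Hypothetical expected answers for a tiny workload.
expected = [1.0, 2.5, 3.75]

# A minor floating point difference is accepted.
print(validate_output([1.0000001, 2.5, 3.75], expected))
# Half the work was never attempted: rejected.
print(validate_output([1.0, 2.5], expected))
# A clearly wrong answer: rejected.
print(validate_output([1.0, 2.6, 3.75], expected))
```

The design point is that the timing of a run only counts once its printed answers have been checked, which addresses both the dead-code and the early-exit difficulties.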

Q4. Should I benchmark my own application?

Yes, if you can; but it may be difficult.

Ideally, the best comparison test for systems would be your own application with your own workload.

Unfortunately, it is often impractical to get a wide set of comparable system measurements

using your own application with your own workload. For example, it may be difficult to extract the application

sections that you want to benchmark, or too difficult to remove confidential information from data sets.

It takes time and effort to create a good benchmark, and it is easy to fall into common

mistakes.

Q5. Should I use a standard benchmark?

Maybe. A standardized benchmark may provide a reference point, if you use it carefully.

You may find that a standardized benchmark has already been run on systems that you are interested in. Ideally,

that benchmark will provide all the characteristics of Table 1 while avoiding common benchmark

mistakes.

Before you consider the results of a standardized benchmark, you should consider whether it measures

things that are important to your own application characteristics and computing needs. For example, a benchmark

that emphasizes CPU performance will have limited usefulness if your primary concern is network throughput.

A standardized benchmark can serve as a useful reference point, but SPEC does not claim that any standardized benchmark

can replace benchmarking your own actual application when you are selecting vendors or products.

SPEC CPU 2026 Basics

Q6. What does SPEC CPU 2026 measure?

SPEC CPU 2026 benchmark suites focus on compute intensive performance, which means these benchmarks emphasize the

performance of:

- Processor - The CPU chip(s).

- Memory - The memory hierarchy, including caches and main memory.

- Compilers - C, C++, and Fortran compilers, including optimizers.

SPEC CPU 2026 intentionally depends on all three of the above - not just the processor.

SPEC CPU 2026 benchmark suites are not intended to stress other computer components such as networking, graphics, Java libraries, or

the I/O system. Note that there are other SPEC

benchmarks that focus on those areas.

Q7. Should I use CPU 2026? Why or why not?

SPEC CPU 2026 benchmark suites provide a comparative measure of integer and/or floating point compute intensive performance.

If this matches with the type of workloads you are interested in, SPEC CPU 2026 benchmark suites provide a good reference point.

Other advantages to using the SPEC CPU 2026 benchmark suite include:

- Most of the benchmark programs are drawn from actual end-user applications, as opposed to being synthetic

benchmarks.

- Multiple vendors use the suite and support it.

- The SPEC CPU 2026 benchmark suite is highly portable.

- Results are available

at https://www.spec.org/cpu2026/results

- The benchmarks are required to be run and reported according to a set of rules to ensure comparability and

repeatability.

Limitations of SPEC CPU 2026: As described above, the ideal benchmark for vendor

or product selection would be your own workload on your own application. Please bear in mind that no standardized

benchmark can provide a perfect model of the realities of your particular system and user community.

Q8. What does SPEC provide?

The SPEC CPU 2026 benchmark suite is distributed as an ISO image that contains:

- Source code for the benchmarks

- Data sets

- A tool set for compiling, running, validating and reporting on the benchmarks

- Pre-compiled tools for a variety of operating systems

- Source code for the SPEC CPU 2026 tools, for systems not covered by the pre-compiled tools

- Documentation

- Run and reporting rules

The documentation is also available at

www.spec.org/cpu2026/Docs/

including installation guides for both Unix-like systems (such as Linux, AIX, and macOS) and Microsoft Windows

systems.

Q9. What must I provide?

Briefly, you will need a computer running Linux, macOS, Unix, or Microsoft Windows with:

- 2 GB of main memory per copy, if you want to run SPECrate suites; or 64 GB to run SPECspeed suites.

- 250 GB disk space is recommended; a minimal installation needs 10 GB.

- C, C++, and Fortran compilers (or a set of pre-compiled binaries from another SPEC CPU 2026 user).

- A variety of chips and operating systems are supported.

The above is only an abbreviated summary. See detail in the System

Requirements document.
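As a rough illustration of the memory figures listed above, the following sketch estimates the main memory to plan for. The function name `min_memory_gb` and the `"rate"`/`"speed"` labels are hypothetical; the estimate ignores operating system overhead, and the System Requirements document remains the authoritative source.

```python
SPECRATE_GB_PER_COPY = 2   # per-copy figure from the summary above
SPECSPEED_GB = 64          # figure for SPECspeed suites from the summary above

def min_memory_gb(suite_kind, copies=1):
    """Estimate main memory (GB) for a run, using the summary figures only."""
    if suite_kind == "rate":
        if copies < 1:
            raise ValueError("copies must be at least 1")
        return SPECRATE_GB_PER_COPY * copies
    if suite_kind == "speed":
        # SPECspeed runs one copy of each benchmark, so copies is not used.
        return SPECSPEED_GB
    raise ValueError(f"unknown suite kind: {suite_kind!r}")

print(min_memory_gb("rate", copies=48))   # 96
print(min_memory_gb("speed"))             # 64
```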

Q10. What are the basic steps to run the SPEC CPU 2026 Benchmark Suite?

A one-page summary is in SPEC CPU 2026 Quick Start.

Here is a summary of the summary:

- Meet the System Requirements.

- Install SPEC CPU 2026 [Unix-like systems] [Windows systems]

- Find a config file -- a file that defines how to build, run, and report on the SPEC CPU benchmarks in

a particular environment -- and customize it.

- Determine which suite you wish to run.

- Use the runcpu command.

- If you wish to generate results suitable for quoting in public, you will need to carefully study and adhere to

the Run Rules.

Q11: How long does it take to run? Does CPU 2026 take longer than CPU 2017?

Run time depends on the system, suite, compiler, tuning, and how many copies or threads are chosen.

One example system is shown below; your times will differ.

Example run times - simple options chosen

| Metric | Config Tested | Individual Benchmarks | Full Run (Reportable) |
|---|---|---|---|
| SPECrate 2026 Integer | 1 copy | 3 to 5 minutes | 5.4 hours (48 copies; base + peak) |
| SPECrate 2026 Floating Point | 1 copy | 4 to 7 minutes | 9.4 hours (48 copies; base + peak) |
| SPECspeed 2026 Integer | 40 threads | 5 to 13 minutes | 8.3 hours (48 threads; base + peak) |
| SPECspeed 2026 Floating Point | 40 threads | 5 to 13 minutes | 6.5 hours (48 threads; base + peak) |

One arbitrary example using a year 2019 system. Your system will differ. 2 iterations chosen, base and peak. Does not include compile time.

Does SPEC CPU 2026 take longer than CPU 2017?

- Maybe, though this depends heavily on the system, compiler, tuning, and other tester choices.

More complicated example: both base + peak, 32 copy rate, 32 thread speed, 3 iterations

| Metric | Config Tested | Full Run CPU 2017 | Full Run SPEC CPU 2026 |
|---|---|---|---|
| SPECrate Integer | 32 copies | 14.0 hours | 18.2 hours |
| SPECrate Floating Point | 32 copies | 31.0 hours | 30.0 hours |
| SPECspeed Integer | 32 threads | 13.9 hours | 24.9 hours |
| SPECspeed Floating Point | 32 threads | 9.7 hours | 27.4 hours |

One arbitrary example using a different year 2019 system. Your system will differ. 3 iterations chosen, base and peak. Does not include compile time.

Another example is discussed in the FAQ

Suites and Benchmarks

Q12. What is a SPEC CPU 2026 "suite"?

A suite is a set of benchmarks that are run as a group to produce one of the overall metrics.

The SPEC CPU 2026 benchmark product includes four suites that focus on different types of compute intensive performance:

| Short Tag | Suite | Contents | Metrics | How many copies? What do Higher Scores Mean? |
|---|---|---|---|---|
| intspeed | SPECspeed®2026 Integer | 13 integer benchmarks | SPECspeed2026_int_base, SPECspeed2026_int_peak | SPECspeed suites always run one copy of each benchmark. Higher scores indicate that less time is needed. |
| fpspeed | SPECspeed®2026 Floating Point | 13 floating point benchmarks | SPECspeed2026_fp_base, SPECspeed2026_fp_peak | SPECspeed suites always run one copy of each benchmark. Higher scores indicate that less time is needed. |
| intrate | SPECrate®2026 Integer | 14 integer benchmarks | SPECrate2026_int_base, SPECrate2026_int_peak | SPECrate suites run multiple concurrent copies of each benchmark. The tester selects how many. Higher scores indicate more throughput (work per unit of time). |
| fprate | SPECrate®2026 Floating Point | 12 floating point benchmarks | SPECrate2026_fp_base, SPECrate2026_fp_peak | SPECrate suites run multiple concurrent copies of each benchmark. The tester selects how many. Higher scores indicate more throughput (work per unit of time). |

The "Short Tag" is the canonical abbreviation for use with runcpu, where context is defined by the tools. In a published document, context may not be clear. To avoid ambiguity in published documents, the Suite Name or the Metrics should be spelled as shown above.

Q13. What are the benchmarks?

The SPEC CPU 2026 benchmark product has 52 benchmarks, organized into 4 suites:

- SPECrate 2026 Integer
- SPECspeed 2026 Integer
- SPECrate 2026 Floating Point
- SPECspeed 2026 Floating Point

Benchmark pairs shown as 7nn.benchmark_r / 8nn.benchmark_s are similar to each other. Differences include: compile flags; workload sizes; and run rules. See: [OpenMP] [memory] [rules]

| SPECrate®2026 Integer | SPECspeed®2026 Integer | Language[1] | KLOC[2] | Application Area |
|---|---|---|---|---|
| | 801.xz_s | CXX,C | 53 | Data compression |
| 706.stockfish_r | | CXX | 13 | Game / AI (chess) - alpha-beta tree search, neural network |
| 707.ntest_r | 807.ntest_s | CXX | 16 | Game / AI (othello) |
| 708.sqlite_r | | C | 245 | SQL compiler/interpreter and database |
| 710.omnetpp_r | | CXX,C | 224 | Discrete event modeling - network and queuing simulations |
| 714.cpython_r | | C | 747 | Python interpreter |
| | 817.flac_s | CXX,C | 57 | Lossless audio compression |
| 721.gcc_r | 821.gcc_s | CXX,C | 3,833 | C language optimizing compiler |
| 723.llvm_r | 823.llvm_s | CXX,C | 3,167 | C/C++ language optimizing compiler |
| 727.cppcheck_r | 827.cppcheck_s | CXX | 287 | Static analysis of C/C++ code |
| 729.abc_r | 829.abc_s | CXX,C | 989 | Sequential logic synthesis and formal verification |
| 734.vpr_r | 834.vpr_s | CXX,C | 210 | FPGA place and route |
| 735.gem5_r | 835.gem5_s | CXX,C | 971 | Computer architecture simulation |
| | 838.diamond_s | CXX,C | 239 | Bioinformatics - metagenomics and protein sequencing |
| | 846.minizinc_s | CXX,C | 372 | Constraint programming |
| 750.sealcrypto_r | | CXX,C | 39 | Security and privacy - Homomorphically Encrypted (HE) query |
| 753.ns3_r | 853.ns3_s | CXX | 942 | Discrete event network simulator for internet systems |
| | 854.graph500_s | C | 10 | Graph analytics |
| 777.zstd_r | | C | 58 | Data compression/decompression |

| SPECrate®2026 Floating Point | SPECspeed®2026 Floating Point | Language[1] | KLOC[2] | Application Area |
|---|---|---|---|---|
| | 800.pot3d_s | F | 12 | Solar physics: finite difference method, conjugate gradient solver |
| | 803.sph_exa_s | CXX | 3 | Astrophysics - Smoothed Particle Hydrodynamics (SPH) |
| 709.cactus_r | 809.cactus_s | CXX,C | 187 | Astrophysics - relativity, finite difference method, time integration |
| | 811.tealeaf_s | C | 5 | High energy physics |
| | 816.nab_s | C | 26 | Molecular modeling |
| | 820.cloverleaf_s | F | 10 | Explicit hydrodynamics |
| 722.palm_r | 822.palm_s | F | 298 | Atmospheric science |
| 731.astcenc_r | | CXX | 43 | Image compression - Adaptive Scalable Texture Compression (ASTC) |
| 736.ocio_r | | CXX | 183 | Color management for visual effects and animation |
| 737.gmsh_r | | CXX,C | 721 | Finite element mesh generation |
| 748.flightdm_r | | CXX | 100 | Flight dynamics models for aeronautics |
| 749.fotonik3d_r | 849.fotonik3d_s | F | 15 | Computational Electromagnetics (CEM) |
| | 857.namd_s | CXX | 9 | Classical molecular dynamics simulation |
| 765.roms_r | 865.roms_s | F | 585 | Regional ocean modeling |
| 766.femflow_r | | CXX | 2,505 | Fluid dynamics: high-order finite element method |
| 767.nest_r | 867.nest_s | CXX | 208 | Neuroscience simulator for spiking neural network models |
| 772.marian_r | 872.marian_s | CXX | 219 | Neural machine translation for written language |
| 782.lbm_r | | C | 1 | Computational fluid dynamics, Lattice Boltzmann Method |
| | 881.neutron_s | C | 4 | Physics simulation of neutron transport in nuclear reactors |

[1] For multi-language benchmarks, the first one listed determines library and link options (details)

[2] KLOC = line count in thousands. Includes all src/ files; includes comments and blank lines.

Q14. Are 7nn.benchmark and 8nn.benchmark different?

Some of the benchmarks in the table above are part of a pair:

7nn.benchmark_r for the SPECrate version

8nn.benchmark_s for the SPECspeed version

Benchmarks within a pair share (most of) their source code.

Differences include:

- Workloads: The input data sets differ, with the SPECspeed version typically solving a more complex

problem.

- Memory: The SPECrate benchmarks are sized to fit within 2 GB (although your system may vary).

The SPECspeed benchmarks are designed to fit in 64 GB.

- Threading: SPECrate benchmarks use only one thread per copy; most of the SPECspeed benchmarks

use multiple threads.

More detail:

[memory]

[OpenMP]

[rules]
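The naming pattern for pairs can be sketched as a small parser. The function names `parse_benchmark` and `candidate_partner_name` are hypothetical. Note that the second function only derives the name a partner would have; many benchmarks in the tables above have no partner, so the result still has to be checked against the benchmark list.

```python
import re

# 7nn.name_r is a SPECrate benchmark; 8nn.name_s is a SPECspeed benchmark.
_NAME = re.compile(r"^([78])(\d{2})\.(\w+)_([rs])$")

def parse_benchmark(name):
    """Split a benchmark name into (suite kind, two-digit number, base name)."""
    match = _NAME.match(name)
    if not match:
        raise ValueError(f"not a 7nn/8nn benchmark name: {name!r}")
    series, nn, base, suffix = match.groups()
    expected_suffix = "r" if series == "7" else "s"
    if suffix != expected_suffix:
        raise ValueError(f"series {series}nn does not match suffix _{suffix}")
    kind = "SPECrate" if series == "7" else "SPECspeed"
    return kind, nn, base

def candidate_partner_name(name):
    """Return the name the other member of the pair would have, if it exists."""
    kind, nn, base = parse_benchmark(name)
    if kind == "SPECrate":
        return f"8{nn}.{base}_s"
    return f"7{nn}.{base}_r"

print(parse_benchmark("707.ntest_r"))              # ('SPECrate', '07', 'ntest')
print(candidate_partner_name("707.ntest_r"))       # 807.ntest_s
print(candidate_partner_name("849.fotonik3d_s"))   # 749.fotonik3d_r
```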

SPEC CPU 2026 Metrics

Q15. What are "SPECspeed" and "SPECrate" metrics?

There are many ways to measure computer performance. Among the most common are:

- Time - For example, seconds to complete a workload.

- Throughput - Work completed per unit of time, for example, jobs per hour.

SPECspeed is a time-based metric; SPECrate is a throughput metric.

| Calculating SPECspeed® Metrics | Calculating SPECrate® Metrics |
|---|---|
| 1 copy of each benchmark in a suite is run. | The tester chooses how many concurrent copies to run. |
| The tester may choose how the problem is parallelized. | One thread is used. OpenMP is disabled. |
| For each benchmark, a performance ratio is calculated as: time on a reference machine / time on the SUT (system under test) | For each benchmark, a performance ratio is calculated as: number of copies * time on a reference machine / time on the SUT (system under test) |
| Higher scores mean that less time is needed. | Higher scores mean that more work is done per unit of time. |
| Example: The reference machine ran 807.ntest_s in 37556 seconds. A particular SUT took about 1/12 the time, scoring about 12. More precisely: 37556/2956.313 = 12.70 | Example: The reference machine ran 1 copy of 707.ntest_r in 638 seconds. A particular SUT ran 4 copies in about half the time, scoring about 8. More precisely: 4*(638/294.95) = 8.65 |

For both SPECspeed and SPECrate, in order to provide some assurance that results are repeatable, the entire process is repeated. The tester may choose:

- To run the suite of benchmarks three times, in which case the tools select the medians.

- Or to run twice, in which case the tools select the lower ratios (i.e. slower).

For both SPECspeed and SPECrate, the selected ratios are averaged using the Geometric Mean, which is reported as the overall metric.

For the energy metrics, the calculations are done the same way, using energy instead of

time in the above formulas.
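The ratio, selection, and averaging rules described above can be sketched in a few lines of Python. The function names are hypothetical and this is not the SPEC tools' implementation; it only reproduces the arithmetic of the two worked examples (12.70 and 8.65).

```python
import math
import statistics

def speed_ratio(ref_seconds, sut_seconds):
    """SPECspeed ratio: time on the reference machine / time on the SUT."""
    return ref_seconds / sut_seconds

def rate_ratio(copies, ref_seconds, sut_seconds):
    """SPECrate ratio: number of copies * reference time / SUT time."""
    return copies * ref_seconds / sut_seconds

def select_ratio(ratios):
    """Pick the median of three runs, or the lower (slower) of two runs."""
    if len(ratios) == 3:
        return statistics.median(ratios)
    if len(ratios) == 2:
        return min(ratios)
    raise ValueError("expected ratios from two or three runs")

def overall_metric(selected_ratios):
    """Geometric mean of the selected per-benchmark ratios."""
    log_sum = sum(math.log(r) for r in selected_ratios)
    return math.exp(log_sum / len(selected_ratios))

# Worked examples from the text.
print(f"{speed_ratio(37556, 2956.313):.2f}")   # 12.70
print(f"{rate_ratio(4, 638, 294.95):.2f}")     # 8.65
```

The geometric mean is computed through logarithms here only to keep the sketch explicit; Python's `statistics.geometric_mean` gives the same result.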

The reference times and reference energy may be found in the observations posted with www.spec.org/cpu2026/results/ 1, 2, 3, and 4.

If you would like more than 3 digits for the reference values, see the CSV versions: 1, 2, 3, and 4.

Q16. What are "base" and "peak" metrics?

SPEC CPU benchmarks are distributed as source code, and must be compiled, which leads to the question:

How should they be compiled? There are many possibilities, ranging from --debug --no-optimize at a low end through highly customized optimization and even source code re-writing at a high end. Any point chosen from that range might seem arbitrary to those whose interests lie at a different point. Nevertheless, choices must be made.

For the SPEC CPU 2026 benchmark suites, SPEC has chosen to allow two points in the range. The first may be of more interest to those

who prefer a relatively simple build process; the second may be of more interest to those who are willing to invest

more effort in order to achieve better performance.

The base metrics (such as SPECspeed2026_int_base) require that all modules of a given language in a

suite must be compiled using the same flags, in the same order. All reported results must include the base

metric.

The optional peak metrics (such as SPECspeed2026_int_peak) allow greater flexibility.

Different compiler options may be used for each benchmark, and feedback-directed

optimization is allowed.

Options allowed under the base rules are a subset of those allowed under the peak rules. A legal base

result is also legal under the peak rules but a legal peak result is NOT necessarily legal under the base rules.

For more information, see the

SPEC CPU 2026 Run and Reporting Rules.
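One part of the base requirement (the same flags, in the same order, for all modules of a given language in a suite) can be illustrated with a simplified check. The function name `base_flag_conflicts`, the `build_plan` structure, and the flags `-O2` and `-O3` are hypothetical; the sketch treats each benchmark as single-language and covers only this one requirement, so the Run and Reporting Rules remain the authority.

```python
from collections import defaultdict

def base_flag_conflicts(build_plan):
    """Return the languages that are compiled with more than one flag sequence.

    build_plan maps a benchmark name to (language, list of flags).
    An empty result means this particular base requirement is satisfied.
    """
    variants_by_language = defaultdict(set)
    for language, flags in build_plan.values():
        # A tuple keeps the order of flags significant.
        variants_by_language[language].add(tuple(flags))
    return {
        language: variants
        for language, variants in variants_by_language.items()
        if len(variants) > 1
    }

# Hypothetical plan: a per-benchmark choice like this could only be a peak build.
plan = {
    "811.tealeaf_s": ("C", ["-O2"]),
    "816.nab_s": ("C", ["-O3"]),
    "800.pot3d_s": ("F", ["-O2"]),
}
print(sorted(base_flag_conflicts(plan)))   # ['C']
```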

Q17. Which SPEC CPU 2026 metric should I use?

It depends on your needs; you get to choose, depending on how you use computers, and these choices will

differ from person to person.

Examples:

- A single user running a variety of generic desktop programs may, perhaps, be interested in SPECspeed2026_int_base.

- A group of scientists running customized modeling programs may, perhaps, be interested in

SPECrate2026_fp_peak.

Q18. What is a "reference machine"? Why use one?

SPEC uses a reference machine to normalize the performance metrics used in the SPEC CPU 2026 suites. Each

benchmark is run and measured on this machine to establish a reference time for that benchmark. These times are then used

in the SPEC calculations.

The reference machine is a historical

Lenovo ThinkSystem HR330A with the Ampere eMAG processor. The eMAG chip, introduced in 2019, used the ARMv8 ISA and

supported up to 32 cores.

Note that when comparing any two systems measured with the SPEC CPU 2026 benchmark suites, their performance relative to each

other would remain the same even if a different reference machine was used. This is a consequence of the mathematics

involved in calculating the individual and overall (geometric mean) metrics.
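The claim about reference machines can be checked numerically. In the sketch below, all times are made-up values for two hypothetical systems and two hypothetical reference machines; the point is only that the ratio of the two overall scores does not change when the reference times change, because the reference times cancel inside the geometric mean.

```python
import math

def geometric_mean(values):
    """Geometric mean computed through logarithms."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

def overall_score(ref_times, sut_times):
    """Geometric mean of per-benchmark ratios (reference time / SUT time)."""
    return geometric_mean([r / s for r, s in zip(ref_times, sut_times)])

# Hypothetical run times (seconds) for three benchmarks on two systems.
sut_a = [100.0, 200.0, 400.0]
sut_b = [50.0, 400.0, 200.0]

# Two very different hypothetical reference machines.
ref_1 = [1000.0, 1000.0, 1000.0]
ref_2 = [300.0, 5000.0, 70.0]

relative_1 = overall_score(ref_1, sut_a) / overall_score(ref_1, sut_b)
relative_2 = overall_score(ref_2, sut_a) / overall_score(ref_2, sut_b)

# The individual scores differ, but A relative to B is the same either way.
print(math.isclose(relative_1, relative_2))   # True
```

An arithmetic mean of ratios would not have this property, which is one reason the geometric mean is the natural choice for normalized metrics.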

Q19. What's new in the SPEC CPU 2026 Benchmark Suite?

Compared to SPEC CPU 2017, what's new in the SPEC CPU 2026 suites?

a. Benchmarks and Metrics

The recent CPUv8 search program proved remarkably

successful, drawing 33 benchmark candidates into consideration. An impressive 29 advanced past Step 3, with 24 of

those ultimately integrated into the final suite. Many of the submissions originated from projects with strong

open-source community backing, which offered opportunities for collaboration with authors and their communities.

The collaboration accelerated cross-system porting and issue resolution as the benchmarks were tested and

ported.

The resulting benchmarks are diverse and meaningful, including flight simulators used by government

agencies, drug discovery programs vital to efforts such as the COVID-19 vaccine, and a media application that has

won an Academy Award. SPEC's intensive evaluation process didn't just "harden" these benchmarks for SPEC CPU; it also

led to valuable fixes and enhancements that were often directly upstreamed back into the original open-source

projects. The development process stands as a powerful testament to a strong relationship between SPEC CPU

and the open-source world.

- The SPEC CPU 2026 benchmark product includes 4 new suites.

- There are 52 new benchmarks. Of these:
  - 38 have never before appeared in a SPEC CPU suite.
  - 14 are updated from their previous appearances (new workload, new source code, or both).

- There are new metrics.

- Memory requirements differ vs. previous SPEC CPU suites.

- Parallelism can be used for 22 of the 26 SPECspeed benchmarks. Four types of parallel processing are used:
  - OpenMP (13 benchmarks)
  - C++ std::thread (6 benchmarks)
  - Fortran DO CONCURRENT (1 benchmark)
  - Process-level multi-tasking for the 2 compilers (821.gcc_s and 823.llvm_s), because that is how compilers typically achieve parallelism in real life.

b. Source Code: C18, Fortran-2018, C++2017

|

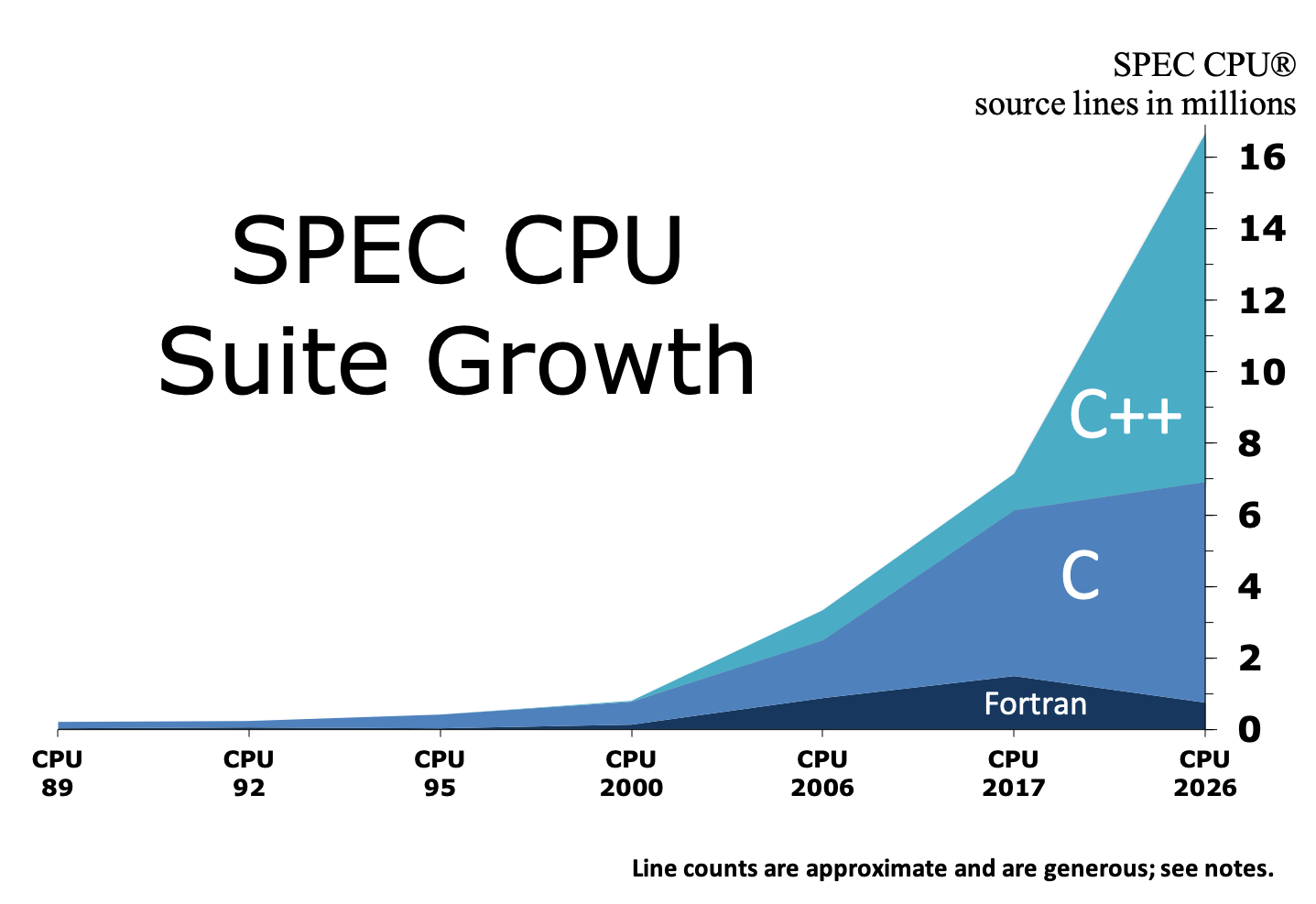

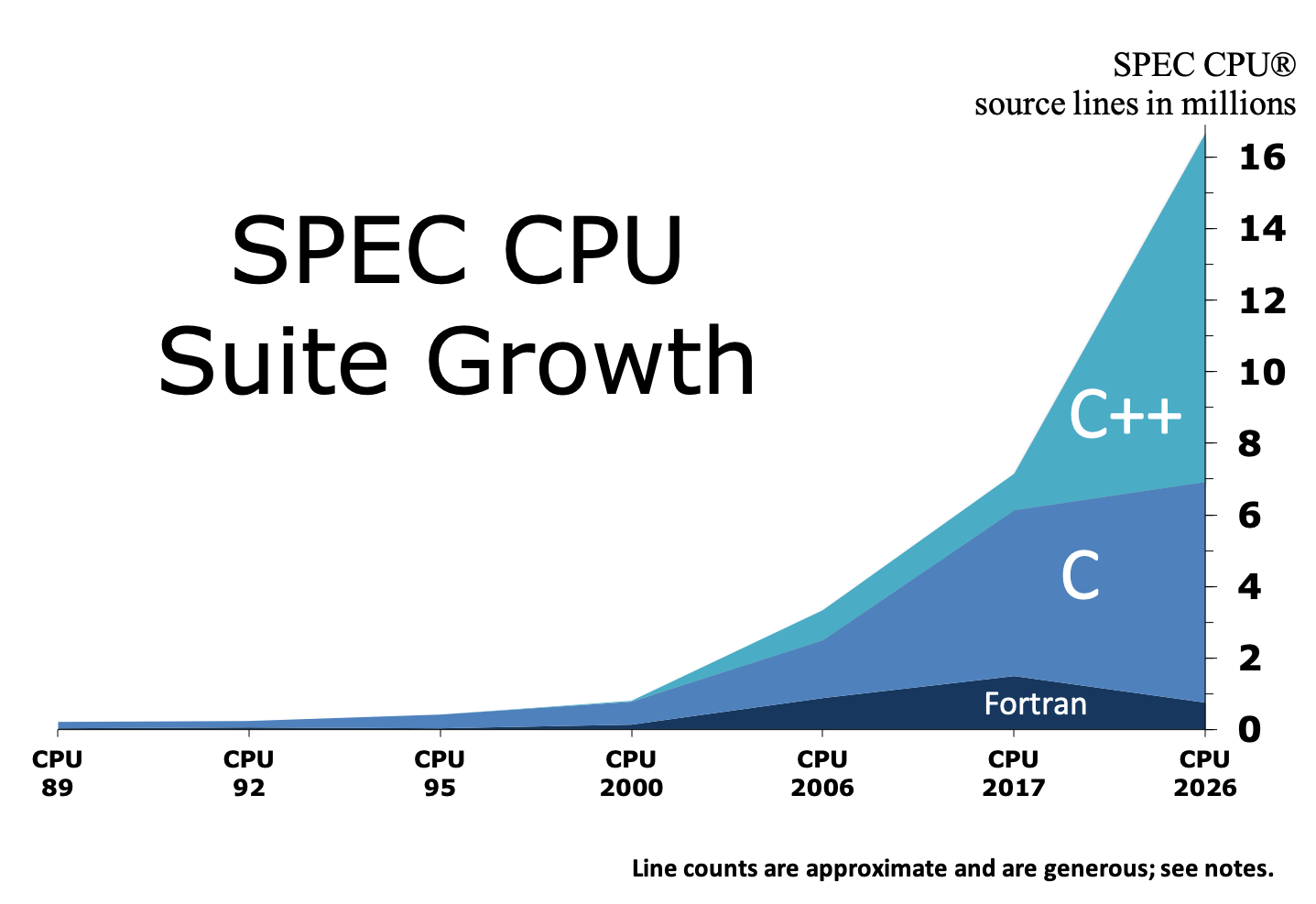

The total source code has increased, as shown in the graph [larger version], because most benchmarks are derived from real

applications, including various open source projects.

During benchmark development, SPEC spends substantial effort working to improve portability, using language standards to

assist in the process. For SPEC CPU 2026 benchmark suites, the standards referenced are C18, Fortran-2018, and C++2017.

Caution: The benchmarks do not always comply perfectly with

ISO/ANSI language standards, because their source code is derived from real applications. The

rules allow optimizers to assume standards *only*

where that does not prevent validation.

|

|

Note that the graph has

generous source estimates: It includes whitespace and comments; it includes all source files in all

CPU/nnn.benchmark/src/ directories, irrespective of whether SPEC CPU Makefiles

reference them. |

c. Rules

Various rules were updated for the CPU 2026 release; see

runrules.html#highlights.

Publishing results

Q20: Where can I find SPEC CPU 2026 results?

Results for measurements submitted to SPEC are available

at https://www.spec.org/cpu2026/results/.

Q21: Can I publish elsewhere? Do the rules still apply?

Yes, SPEC CPU 2026 results can be published independently, and Yes, the rules still apply.

- The SPEC CPU license requires that published results must follow the

SPEC CPU 2026 Run and Reporting Rules.

Note - the above link goes to the copy on the SPEC web site

-- the only official version of the rules with all updates. Your published results must comply with the

latest posted version.

- Any public use of SPEC benchmark results is subject to the SPEC Fair Use Rule, which requires (among other things) that

your statement must be clear; must be correct; must reference public data; must identify data sources; must

clearly distinguish between SPEC metrics and other types of data.

- Any SPEC member may request the rawfile from your result, per Rule 5.5.

Although you are allowed to publish independently, SPEC encourages results to be submitted for publication on SPEC's web site because it ensures a peer review process and uniform presentation of all results.

The Fair Use rule recognizes that Academic and

Research usage of the benchmarks may be less formal; the key requirement is that non-compliant numbers must be

clearly distinguished from rule-compliant results.

SPEC CPU performance results may be estimated, but SPEC CPU energy metrics are not

allowed to be estimated. Estimates must be clearly marked. For more information, see the

SPEC CPU 2026 rule on estimates and the CPU 2026 section of the general SPEC

Fair Use rule.

Transitions

Q22. What will happen to SPEC CPU 2017?

Three months after the announcement of the SPEC CPU 2026 benchmark product, SPEC

will require all CPU 2017 results submitted for publication on SPEC's web site to be accompanied by SPEC CPU 2026 results. Six

months after announcement, SPEC will stop accepting CPU 2017 results for publication on its web site.

After that point, you may continue to use SPEC CPU 2017. You may publish new CPU 2017 results only if you plainly disclose the retirement (the link

includes sample disclosure language).

Q23. Can I convert SPEC CPU 2017 results to SPEC CPU 2026?

There is no formula for converting SPEC CPU 2017 results to SPEC CPU 2026 results and vice versa; they are

different products. There probably will be some correlation between SPEC CPU 2017 and SPEC CPU 2026 results (that is,

machines with higher CPU 2017 results often will have higher SPEC CPU 2026 results), but the correlation will be far

from perfect, because of differences in code, data sets, hardware stressed, metric calculations, and run rules.

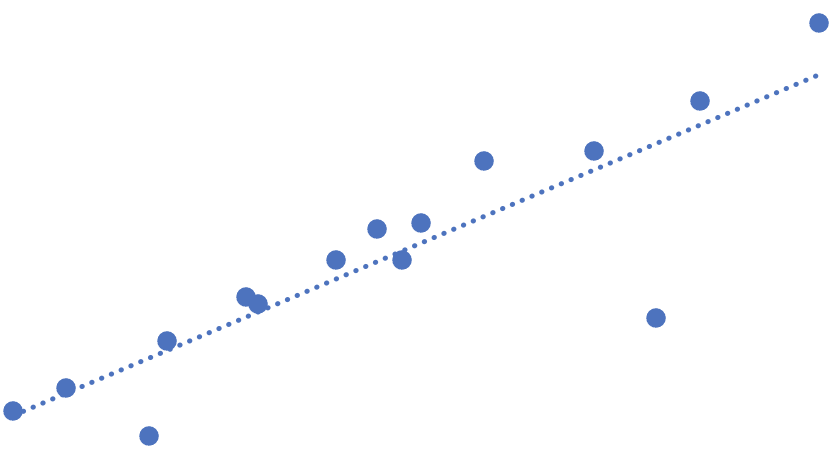

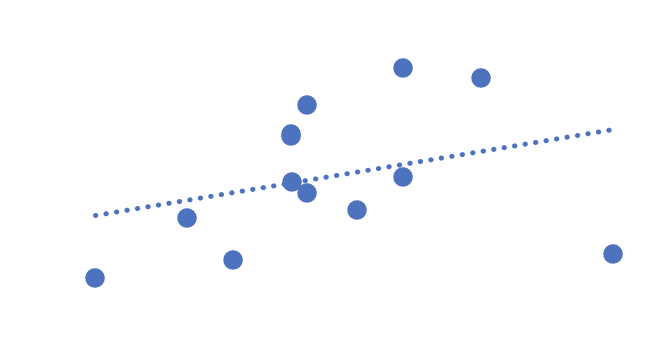

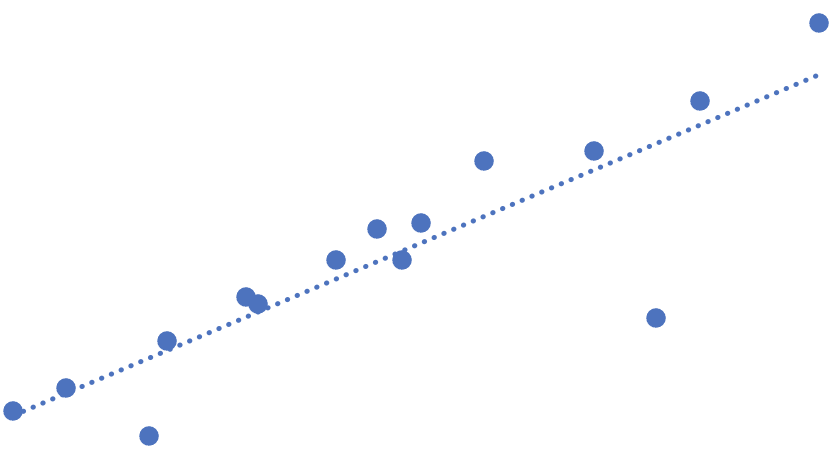

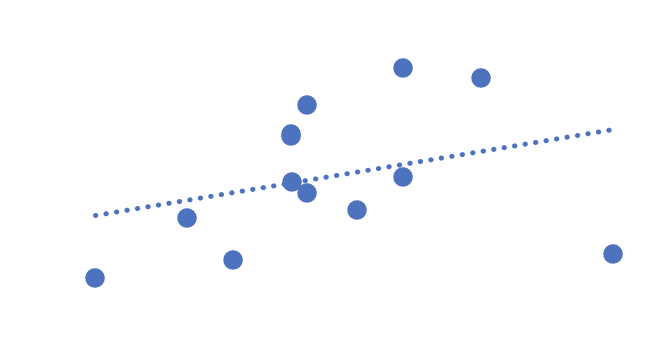

Weak Trends

The graphs above illustrate that although there is some correlation, the correlation is far from perfect. (The graphs are based on testing of real systems, but used pre-release versions of the SPEC CPU 2026 suites, and therefore the systems, metrics, and actual values are intentionally not disclosed.)

Smaller numbers

For systems that are tested with both the SPEC CPU 2017 and the SPEC CPU 2026

benchmark products, it is normal and expected that the SPEC CPU 2026 score will be a smaller number than the

corresponding SPEC CPU 2017 score, because the reference machine for the new suite

(Ampere eMAG) is faster than the reference machine for the old suite:

SPEC CPU 2026 Benchmark Selection

Q24: What criteria were used to select the benchmarks?

SPEC considered:

- Representativeness: Is it a well-known application or application area?

- Availability of workloads that represent real problems.

- Performance profile: Is the candidate compute bound, spending most of its time in the benchmark source, and little

time in IO and system services?

- Portability to a variety of CPU architectures, including ARM, Power ISA, and x86.

- Portability to various operating systems, including AIX, Linux, macOS, and Windows.

- Memory usage: SPECspeed benchmarks should fit within 64 GB of main memory, and SPECrate benchmarks within 2 GB

per copy.

Q25. Were some benchmarks 'kept' from SPEC CPU 2017?

Although some of the benchmarks from SPEC CPU 2017 are included in SPEC CPU 2026 suites, they have been given different

workloads and/or modified to use newer versions of the source code. Therefore, for example, the SPEC CPU 2026

benchmark 849.fotonik3d_s may perform differently than the SPEC CPU 2017 benchmark 549.fotonik3d_s.

Some benchmarks were not retained because it was not possible to update the source or workload.

Others were left out because SPEC felt that they did not add significant performance information compared to the

other benchmarks under consideration.

Q26. Are the benchmarks comparable to other programs?

Many of the SPEC CPU 2026 benchmarks have been derived from publicly available application programs. The

individual benchmarks in this suite may be similar, but are NOT identical to benchmarks or programs with similar names

which may be available from sources other than SPEC. In particular, SPEC has invested significant effort to improve

portability and to minimize hardware dependencies, to avoid unfairly favoring one hardware platform over another. For

this reason, the application programs in this distribution may perform differently from commercially available versions of

the same application.

Therefore, it is not valid to compare SPEC CPU 2026 benchmark results with anything other than other SPEC

CPU 2026 benchmark results.

Miscellaneous

Q27. Can I run the benchmarks manually?

To generate rule-compliant results, an approved toolset must be used. If several attempts at using the

SPEC-provided tools are not successful, you should contact SPEC for technical support. SPEC may be able

to help you, but this is not always possible -- for example, if you are attempting to build the tools on a platform that

is not available to SPEC.

If you just want to work with the benchmarks and do not care to generate publishable results, SPEC provides

information about how to do so.

Q28. How do I contact SPEC?

SPEC can be contacted in several ways. For general information, including other means of contacting SPEC,

please see SPEC's Web Site at:

https://www.spec.org/

General questions can be emailed to:

info@spec.org

SPEC CPU 2026 Technical Support Questions can be sent to:

cpu2026support@spec.org

Q29. What should I do next?

If you do not have the SPEC CPU 2026 benchmark suite, it is hoped that you will consider ordering it.

If you are ready to get started, please follow one of these two paths:


Copyright © 2017-2026 Standard Performance Evaluation Corporation (SPEC®)